Purging all unused or dangling images, containers, volumes, and networks.

Import lines (some module paths are truncated): `from datetime import datetime`, `timedelta`, `from airflow import DAG`, `from import PythonOperator`, `from .operators.sparksubmit import SparkSubmitOperator`, `from pyspark.sql import SparkSession`.

Related posts and questions: Run airflow commands on official Airflow docker-compose; Using Apache Airflow's Docker Operator with Amazon's Container Repository (Brian Campbell: "Last year, Lucid Software's data science and analytics teams moved to Apache Airflow for scheduling tasks."); How can I run jupyter in airflow with docker-compose; How to run docker command in this Airflow docker container; No module named airflow when initializing Apache airflow docker.

Unsuccessful trials: trials with Airflow 2 inside Docker with DockerOperator (Docker inside Docker), and using the Apache Airflow Docker operator with rootless Docker, with edits for permissions for the Docker installation, which failed miserably.

Running Apache Airflow as a service in a virtual environment: a systemd unit doesn't interpolate variables, and it will ignore lines starting with "export" in .bashrc. This causes an issue on server restart, because the variables are not exported. Add the following to a startup script:

`cd /home/ubuntu/dags_root/airflow2-dockeroperator-nodejs-gitlab/dags && source /home/ubuntu/airflow/airflow_venv/bin/activate && git pull && pip install -r /home/ubuntu/dags_root/airflow2-dockeroperator-nodejs-gitlab/dags/requirements.txt && sudo systemctl stop rvice && sudo systemctl start rvice && sudo systemctl stop rvice && sudo systemctl start rvice`

This completes the DAG run with DockerOperator in Airflow. Keep in mind, however, that the server needs at least an AWS EC2 t2.medium equivalent instance just to run the DAGs with DockerOperator in Airflow.

A related issue with DockerSwarmOperator: when Airflow runs tasks in Docker Swarm using DockerSwarmOperator, a task can remain running in Airflow after it completes, even though the corresponding container has exited.
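On the point above about systemd ignoring lines that start with "export": one possible workaround is to turn those lines into plain `KEY=value` assignments that a unit can load. The following is only a minimal sketch of that idea, not the method used in this post. The function name `bashrc_exports_to_env_lines` and the sample variable values are hypothetical.

```python
from typing import List


def bashrc_exports_to_env_lines(text: str) -> List[str]:
    """Turn `export KEY=value` lines into plain `KEY=value` lines.

    Anything that is not a simple `export KEY=value` line (comments,
    aliases, blank lines) is skipped. Values are copied verbatim, so
    references such as $HOME are NOT expanded.
    """
    env_lines = []
    for raw in text.splitlines():
        line = raw.strip()
        if not line.startswith("export "):
            continue
        assignment = line[len("export "):].strip()
        key, sep, value = assignment.partition("=")
        # Skip `export KEY` without a value and invalid variable names.
        if not sep or not key.isidentifier():
            continue
        env_lines.append(f"{key}={value}")
    return env_lines


# Hypothetical .bashrc content, for illustration only.
sample = """
# Airflow settings
export AIRFLOW_HOME=/home/ubuntu/airflow
export AIRFLOW__CORE__LOAD_EXAMPLES=False
alias ll='ls -l'
"""

env_lines = bashrc_exports_to_env_lines(sample)
print("\n".join(env_lines))
```

The resulting lines could be written to a file that the unit references through systemd's `EnvironmentFile=` directive. Because systemd does not interpolate variables, any value that depends on another variable would still need to be written out in full by hand.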
Task log from the DAG run's task details (graph view) for the successful run:

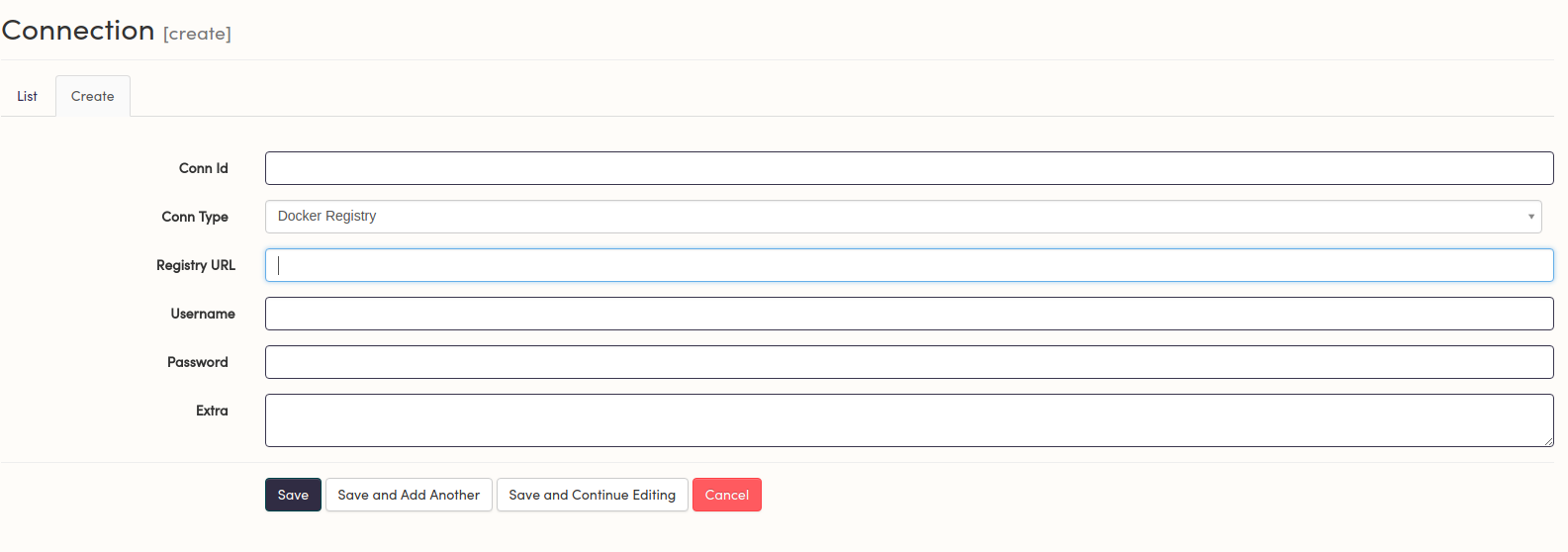

Task detail from the DAG run's task details (graph view) for the successful run.

Your DAG is now available. Enable (unpause) it first, and then trigger it.

The Docker operator module implements the Docker operator. It contains the class `DockerOperator`, which executes a command inside a Docker container, and the function `stringify(line)`, which makes sure a string is returned even if bytes are passed.

You need to log in to the GitLab container registry from Docker on your local machine (this step also needs to be done on the server). Basically, this step generates the required file at `~/.docker/config.json`.

`cd ~/dags_root/ && git clone && cd airflow2-dockeroperator-nodejs-gitlab/dags && pip install -r requirements.txt`

Create a sample project in GitLab on your local machine.

Sample URLs where you can find the container registry for your repo, based on the repo being used for the post.

This post connects to the GitLab container registry via the Docker remote API, without using a connection from Airflow via the `docker_conn_id` parameter.

This completes the Docker 20.10.7 installation and setup.
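The `stringify(line)` helper mentioned above is described only by its one-line summary. A minimal sketch of a function with that behavior could look like the following. This is not the Airflow source, and decoding as UTF-8 with replacement characters is an assumption made here for illustration.

```python
from typing import Union


def stringify(line: Union[str, bytes]) -> str:
    """Return `line` as a str, decoding it first if bytes were passed."""
    if isinstance(line, bytes):
        # Assumption: treat the bytes as UTF-8 and replace undecodable
        # bytes instead of raising an error.
        return line.decode("utf-8", errors="replace")
    return line


# Container log output can arrive as bytes or as str.
for chunk in [b"Step 1/3 : FROM node", "Successfully built"]:
    print(stringify(chunk))
```

A helper like this lets log-handling code treat every line as text, whichever type the Docker client hands back.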
